Mirco Ravanelli

Biographie

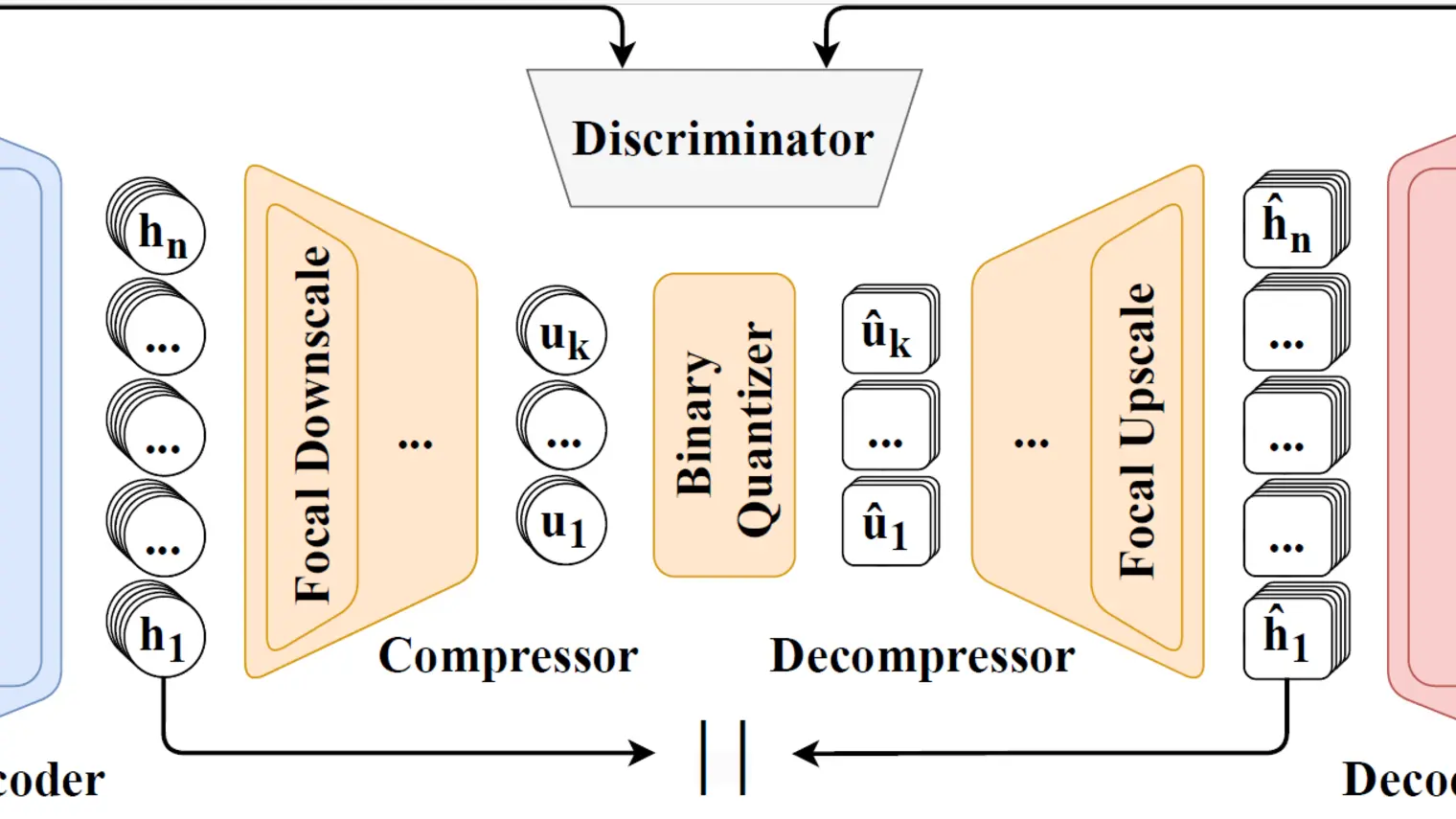

Mirco Ravanelli est professeur adjoint à l'Université Concordia, professeur associé à l'Université de Montréal et membre associé de Mila – Institut québécois d’intelligence artificielle. Lauréat du prix Amazon Research 2022, il est expert en apprentissage profond et en IA conversationnelle, et a publié plus de 60 articles dans ces domaines. Il se concentre principalement sur les nouveaux algorithmes d'apprentissage profond, y compris l'apprentissage autosupervisé, continu, multimodal, coopératif et économe en énergie. Mirco Ravanelli a effectué son postdoctorat à Mila, sous la direction du professeur Yoshua Bengio. Il est notamment le fondateur et le chef de file de SpeechBrain, l'une des boîtes à outils en code source ouvert les plus largement adoptées dans le domaine du traitement de la parole et de l'IA conversationnelle.