Mirco Ravanelli

Biography

Mirco Ravanelli is an assistant professor at Concordia University, an adjunct professor at Université de Montréal, and an associate member of Mila – Quebec Artificial Intelligence Institute.

Ravanelli is an expert in deep learning and conversational AI and has published over sixty papers in these fields. His contributions were honoured with a 2022 Amazon Research Award.

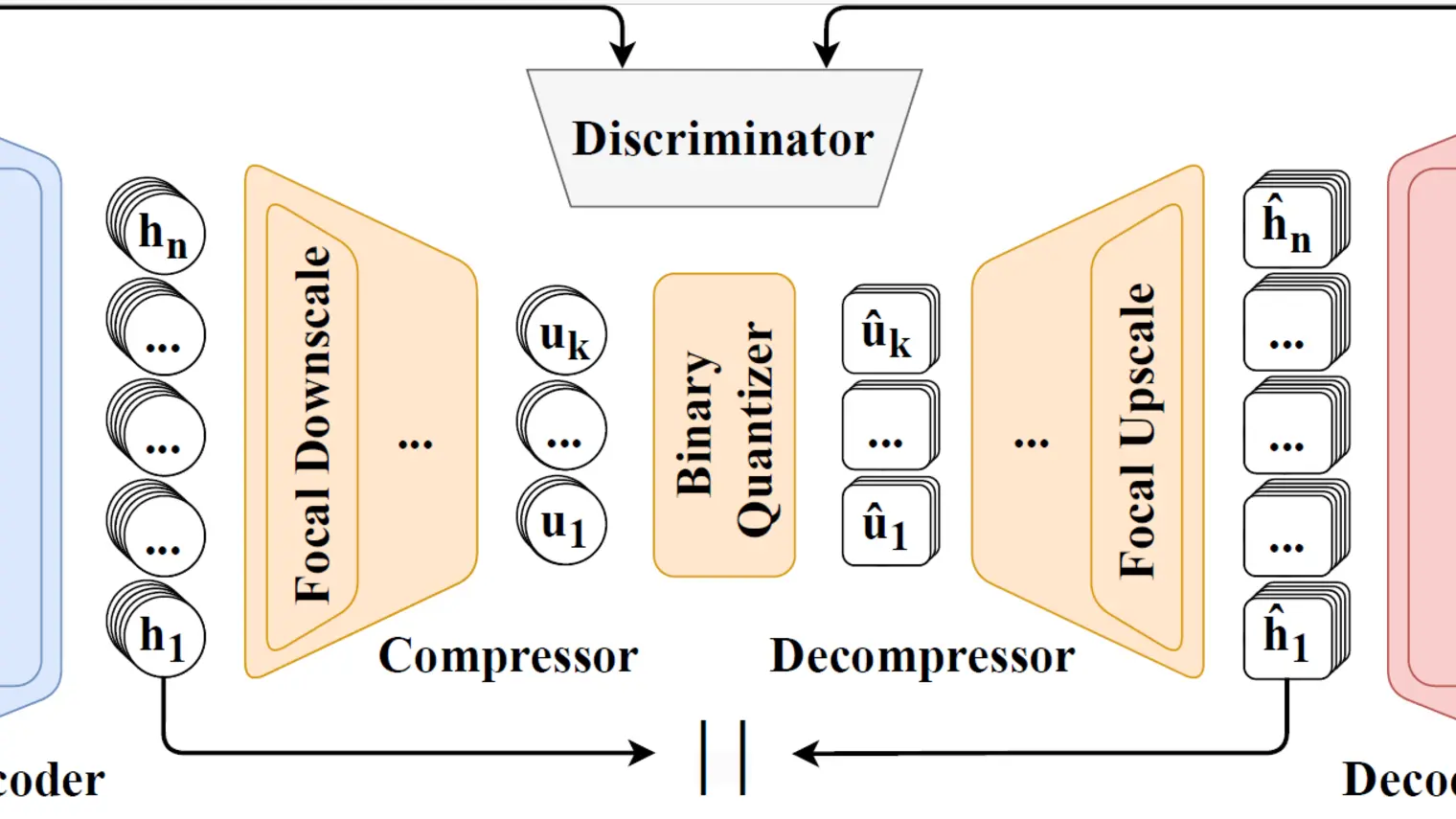

His research focuses primarily on novel deep learning algorithms, including self-supervised, continual, multimodal, cooperative and energy-efficient learning.

Formerly a postdoctoral fellow at Mila under Yoshua Bengio, he founded and now leads SpeechBrain, one of the most widely used open-source toolkits for speech processing and conversational AI.