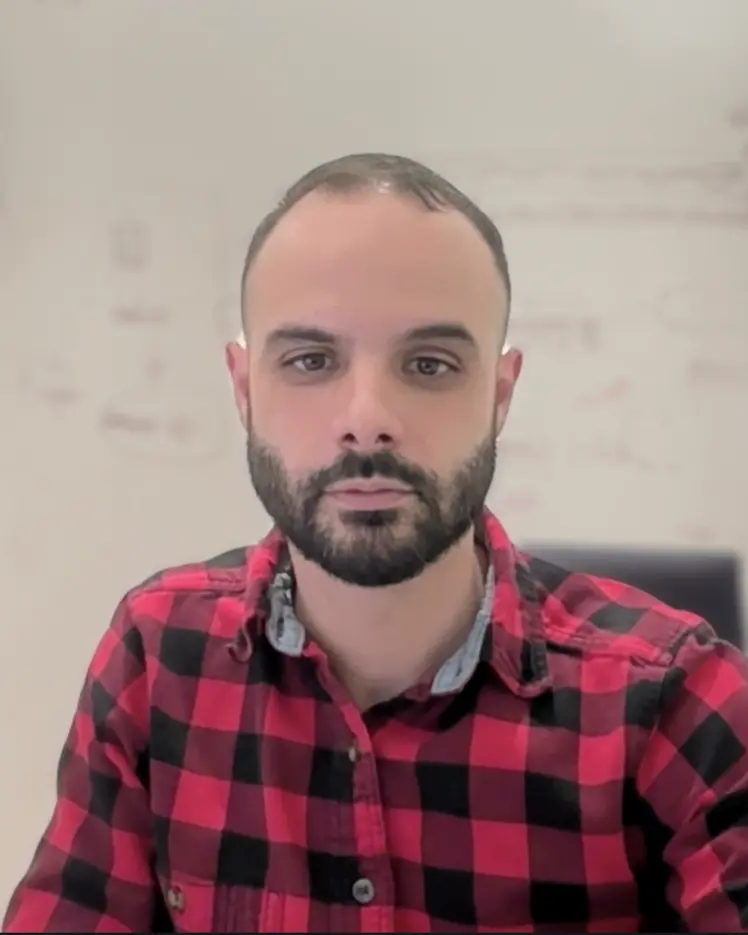

David Vázquez

Associate Industry Member

Adjunct Professor, Polytechnique Montréal, Department of Computer Engineering and Software Engineering

ServiceNow

Research Topics

Representation Learning

Multimodal Learning

Deep Learning

Large Language Models (LLM)

Conversational AI

Generative Models

Computer Vision