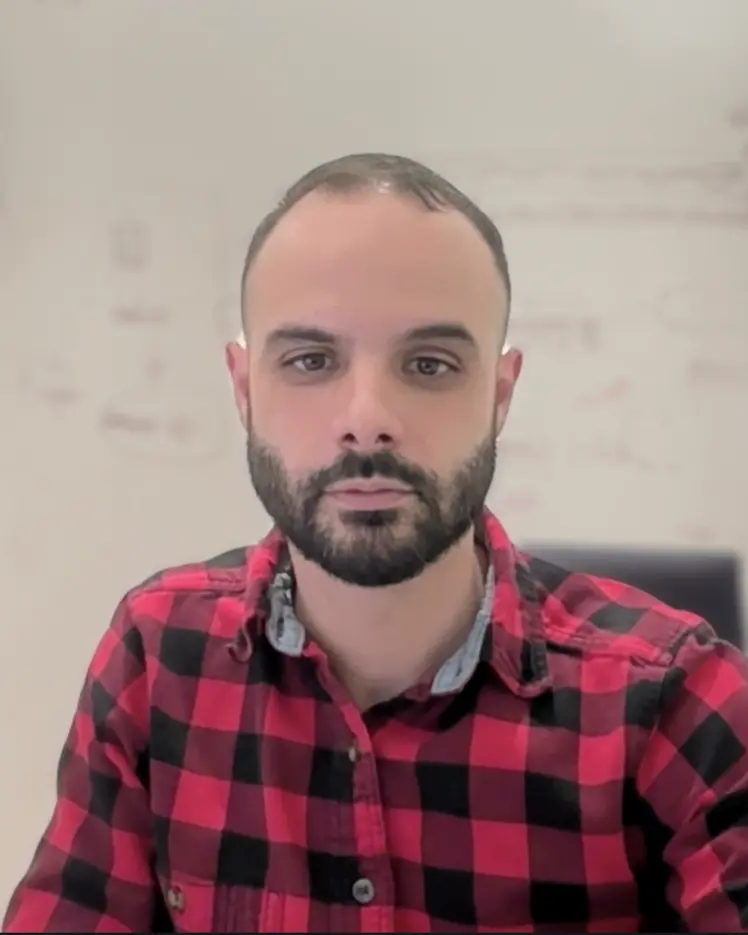

David Vázquez

Associate Industry Member

Adjunct Professor, Polytechnique Montréal, Department of Computer Engineering and Software Engineering

ServiceNow

Research Topics

Computer Vision

Conversational AI

Deep Learning

Generative Models

Large Language Models (LLM)

Multimodal Learning

Representation Learning