This year, Mila partnered with the World Summit AI conference in Montréal to highlight our researchers’ work in front of a diverse audience. On April 19 and 20, 2023, nine of our professors shared their expertise and perspectives on the current state of artificial intelligence (AI) research at the Palais des congrès.

Main stage

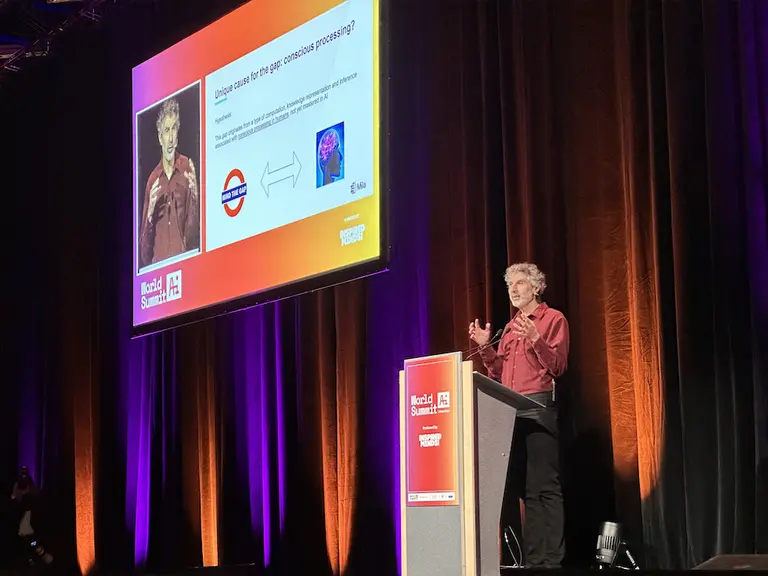

Yoshua Bengio kicked off the Summit by speaking about what is missing from current AI and how we might bridge the gap. He explained that current AI systems such as ChatGPT lack key elements, including the ability to reason and to adapt to new situations as a human being would, and described how his lab’s most recent work could help build better models. He also called for better governance of AI in the face of unprecedented technological change.

The next day, Joëlle Pineau talked about the rapid emergence of generative AI tools and her recent work on Segment Anything, a system that builds flexible representations of objects and could be used to count cells in medical images or to map the distribution of trees and animals in a habitat. She emphasized the need to keep science open for the benefit of all in the face of an increasingly competitive innovation ecosystem.

Mirco Ravanelli built on this idea by presenting SpeechBrain, an open-source toolkit for conversational AI that supports a wide range of applications such as speech recognition, language modeling, speech synthesis, and dialogue. He underlined the need to make technology more accessible, to prevent the concentration of power in too few hands, and to provide “recipes” that allow models to be replicated from scratch.

Aishwarya Agrawal described her work to advance multimodal vision-language learning. She explained why combining vision and language can strengthen AI systems and help them better emulate human learning, and presented recent tools that describe images and extract context from them.

Simon Lacoste-Julien spoke about causal representation learning and the importance of modeling causal relationships to obtain more robust machine learning models. He presented a method that can automatically learn high-level variables (such as the position of objects in an image) and their causal relationships from image data alone, without any supervision, opening the door to robots that can make sense of their environment.

Deep dive tech talks

Golnoosh Farnadi detailed a pathway to developing responsible AI systems. She described the different types of algorithmic harm and explained how to ensure fairness throughout a model’s development and deployment pipeline.

Tal Arbel discussed the promise of AI for personalized medicine based on medical imaging. Focusing on neurological diseases, she emphasized the need for trustworthy AI systems in clinical deployment.

Danilo Bzdok shared insights on machine learning paradigms for single-subject prediction. He detailed his work fine-tuning pre-trained models on written reports so that they can, for instance, emulate a clinician's intuition when predicting autism in young patients.

Dhanya Sridhar spoke about her work on drawing causal inferences from text with machine learning. She explained how this can lead to better decision-making and shared techniques for doing so, for instance by studying characteristics of scientific articles (including their titles) to find what makes them accepted or rejected.