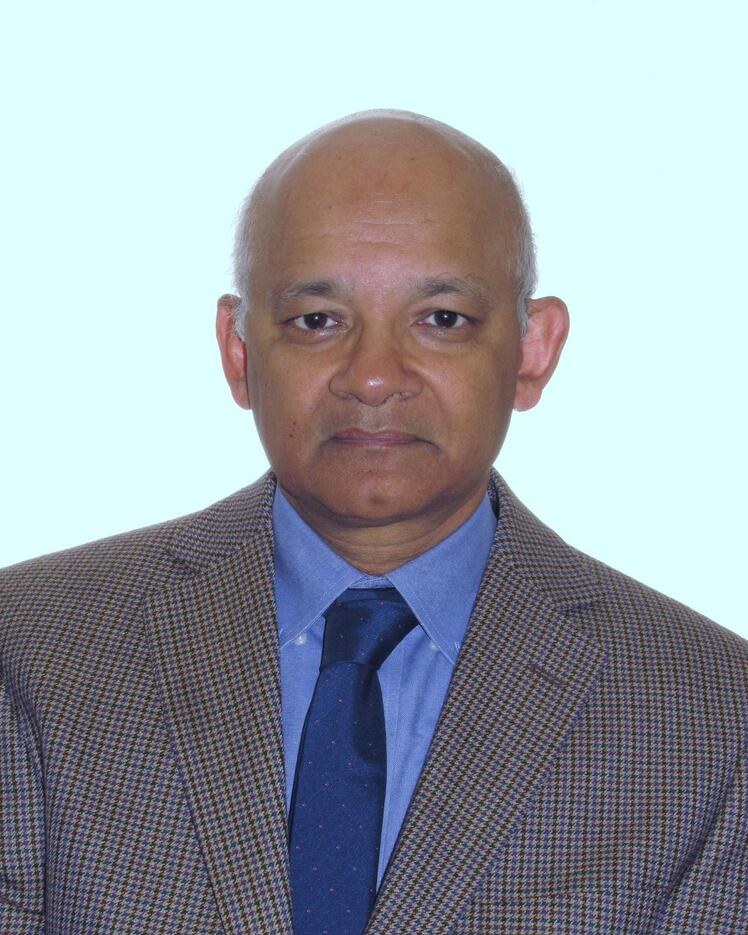

Prakash Panangaden

Biography

Prakash Panangaden studied physics at the Indian Institute of Technology in Kanpur, India. He earned a master's degree in physics from the University of Chicago, where he studied stimulated emission from black holes. He then earned a PhD in physics from the University of Wisconsin-Milwaukee, working on quantum field theory in curved spacetime. He was an assistant professor of computer science at Cornell University, where he worked primarily on the semantics of concurrent programming languages. He has been at McGill University since 1990. Over the past 25 years, he has worked on many aspects of Markov processes: process equivalence, logical characterization, approximation, and metrics. Recently, he has worked on using metrics to improve representation learning. He has also published papers in physics, quantum information, and pure mathematics. He is a Fellow of the Royal Society of Canada and of the Association for Computing Machinery (ACM).